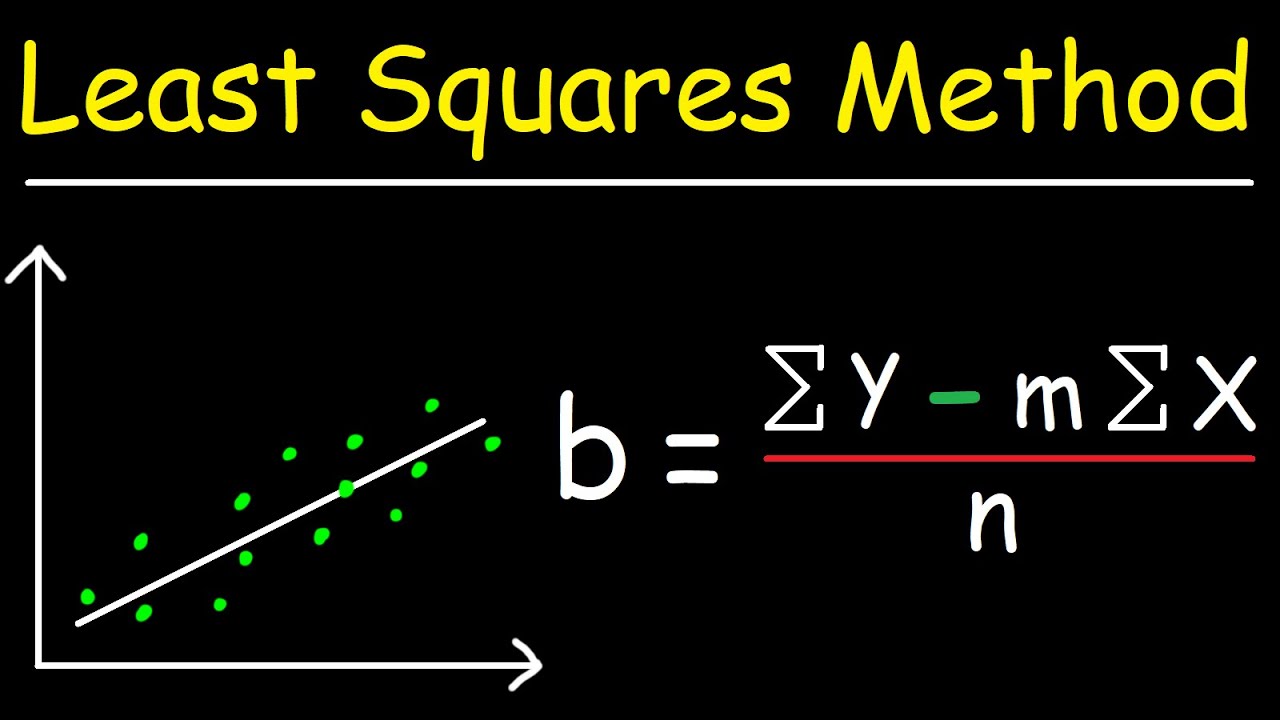

In statistics, linear regression is a statistical model which estimates the linear relationship between a scalar response and one or more explanatory variables (also known as dependent and independent variables). The case of one explanatory variable is called simple linear regression; for more than one, the process is called multiple linear regression. This term is distinct from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable. If the explanatory variables are measured with error, then errors-in-variables models (also known as measurement error models) are required.

In linear regression, the relationships are modeled using linear predictor functions whose unknown model parameters are estimated from the data. Most commonly, the conditional mean of the response given the values of the explanatory variables (or predictors) is assumed to be an affine function of those values; less commonly, the conditional median or some other quantile is used. Like all forms of regression analysis, linear regression focuses on the conditional probability distribution of the response given the values of the predictors, rather than on the joint probability distribution of all of these variables, which is the domain of multivariate analysis. Linear regression was the first type of regression analysis to be studied rigorously and to be used extensively in practical applications.

Linear regression is a statistical approach that attempts to explain the relationship between two variables. In its simplest form, the model is a straight line, y = a × x + b, where y is the dependent variable and x is the independent variable.

Linear regression aims to explain the relationship between y and x. Specifically, it models the change in y for any change in x. The first parameter, the slope a, controls the change in y per unit change in x. The second parameter, b, is the intercept: the value of y when x equals zero.

Linear regression is a very powerful tool, as it can help you predict the "future". For example, we can use linear regression to predict future stock prices. Let's say we model the stock price of Company Alpha as a linear function of expected GDP growth. If the expected GDP growth of the following year is 10%, plugging that value into the model gives the predicted stock price of Company Alpha.

However, it is important to understand that not all relationships are linear. If your data can't be explained using just a straight line, you might want to try other regression methods. Please visit our quadratic regression calculator and exponential regression calculator.

Let's say you have now modeled a linear relationship between y and x using linear regression. The next vital step is to estimate the accuracy of your linear model, and this is where the calculation of the residual comes in. The residual is the difference between the observed value and the predicted value at a certain point in the model.

If the observed value is larger than the predicted value, the residual is positive. If the predicted value is larger than the observed value, the residual is negative. The further the residual is from zero, the less accurate the model is in predicting that particular point. However, to assess the performance of the whole linear model, we need to sum up all the residuals. This is when we need the sum of squared residuals, which prevents the positive residuals from being offset by the negative ones.

Theory aside, let's dive into how to calculate residuals in statistics to help you understand the process. As we mentioned previously, the residual is the difference between the observed value and the predicted value at one point. We can calculate the residual as:

e = y − ŷ

For instance, say we have a linear model of y = 2 × x + 2. One of the actual data points we have is (2, 7), which means that when x equals 2, the observed value is 7. However, according to the model, the predicted value ŷ is 2 × 2 + 2 = 6. Hence, according to the equation above, the residual e is 7 − 6 = 1.

To assess the whole linear model, determining the residual of a single data point is not enough, since you will probably have many data points. So we now need to sum up all the individual residuals. To capture both the positive and negative deviations, we take the sum of e² instead of e, because squaring turns all the negative residuals into positive values.
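
The residual and sum-of-squared-residuals calculations described in this article can be sketched in a few lines of Python. This is only a minimal illustration under stated assumptions: it uses the linear model y = 2 × x + 2 and the data point (2, 7) from the worked example, while the two extra data points and the function names (`predict`, `residuals`, `sum_of_squared_residuals`) are made up for the example.

```python
# Linear model from the worked example: y = 2 * x + 2
SLOPE = 2.0
INTERCEPT = 2.0


def predict(x):
    """Return the predicted value (y-hat) for a given x."""
    return SLOPE * x + INTERCEPT


def residuals(points):
    """Return the residual e = observed - predicted for each (x, y) point."""
    return [y - predict(x) for x, y in points]


def sum_of_squared_residuals(points):
    """Square each residual so negatives cannot cancel positives, then sum."""
    return sum(e ** 2 for e in residuals(points))


# (2, 7) comes from the article; the other two points are hypothetical.
data = [(2, 7), (1, 3), (4, 10)]

print(residuals(data))                 # [1.0, -1.0, 0.0]
print(sum(residuals(data)))            # 0.0
print(sum_of_squared_residuals(data))  # 2.0
```

Note that the plain sum of the residuals comes out as zero here even though two of the three points are off the line. That cancellation is the reason the squared version is used to judge the whole model.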
